Richard Foord, the Liberal Democrat MP for Honiton & Sidmouth, said: “South West Water is a disgrace. I wrote to the chief executive about spills at Salcombe Regis last October, and received the cliched reply about ‘we take the issues raised seriously’. Rubbish!”

[Meanwhile David Reed MP for Exmouth and Exeter East is presumably still striving to stay “on the same page” as everyone except his constituents while his predecessor Simon Jupp continues reaping the benefits of being Director of Corporate Affairs to the Pennon Group (parent company of SWW) – Owl]

A Times investigation shows there were nearly 15,000 dry-day sewage spills last year. Water companies could face prosecution as swimmers fall ill.

Adam Vaughan, George Willoughby www.thetimes.com

Scores of illegal sewage spills into rivers and the sea took place across England on average every day last year, a Times investigation has found.

Documents, data, residents’ accounts, video and analysis show a widespread picture of companies illegally breaching permits regulating sewage spills from about 2,500 outfalls. The findings led regulators to warn that water companies face prosecution if they do not act.

Hundreds of thousands of raw sewage discharges from outfalls each year are legally permitted due to heavy rainfall. However, spills after a dry period of more than 24 hours are defined as illegal by the Environment Agency.

The Times can reveal the true annual extent of those criminal incidents for the first time.

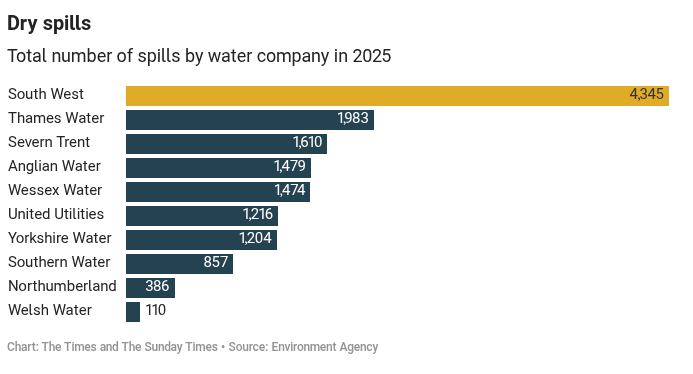

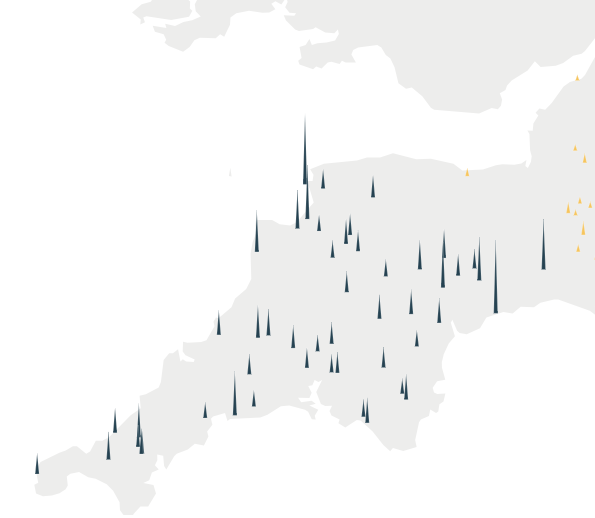

There were almost 15,000 dry-day spills last year. South West Water had more than 4,300 discharges, making it the most serious offender. It was also responsible for the two worst sites in the country, both near beautiful beaches in Devon.

Debt-laden Thames Water, which is at risk of collapsing into temporary nationalisation, caused nearly 2,000. Even Severn Trent, the poster child of best practice in the water sector and the only company to be awarded the top environmental rating by regulators, was responsible for about 1,600.

The spills by ten companies are not just illegal but pernicious. Wild swimmers have come to expect discharges after heavy rain but not after a dry day, putting them at greater risk of falling ill. The pollution is also more concentrated, making it more harmful for fish and other aquatic life.

Feargal Sharkey, the Undertones star and water campaigner, said the figures showed an “absolute exploitation and abuse of both environment and customers”. The angler turned activist, 67, noted the spills only related to those at sewage treatment works, so “god knows” what was happening in the rest of the sewer network.

He said: “Clearly government is now complicit in presiding over one of the greatest acts of criminality and lawbreaking that we’ve witnessed in the modern world in this country. The truth is, government needs to start bringing criminal charges against the executives and boards of those water companies that were knowingly, wilfully disregarding the law.”

Water companies have previously tried to cast doubt when campaigners accuse them of illegal spills, because so many are legally permitted when rain overwhelms capacity. But the dry-day figures are self-reported by the companies and not in dispute.

Since January the sector has been privately submitting the incidents to the Environment Agency, which believes they are overwhelmingly illegal. The numbers were released to The Times under transparency laws.

Detail for South West Water

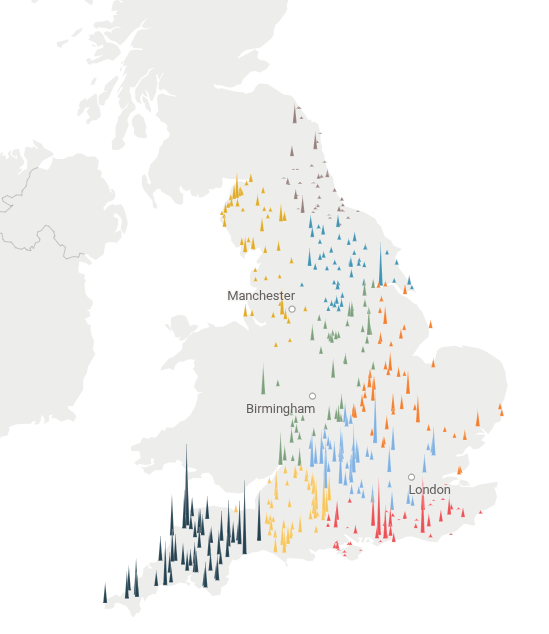

The figures were then further analysed by Surfers Against Sewage, a campaigning charity, which found that raw sewage had been dumped in 142 official swimming spots around England. Four fifths of those bathing waters were usually “good” or “excellent” quality, the two best possible ratings.

Portreath in Cornwall, Fell Foot at Windermere, Cumbria, and Totland Bay on the Isle of Wight were among the worst-affected bathing waters, the campaigners found. During the May-to-September bathing season, when most people swim, 43 of the 142 were hit by a total of 838 hours of sewage spills on dry days.

The charity linked dry spills to 20 people falling sick, based on swimmers submitting reports to its app last year. Reports were logged across 14 locations, and Sidmouth, Gyllyngvase and Westward Ho! had numerous cases. One person, who was forced to take eight days off work, had their sickness attributed by a doctor to poor water quality. One of the 20 reports was for a child under the age of five.

The group counted cases where people swam within 3km of a spill location, covering the duration of the discharge and 24 hours afterwards. Giles Bristow, chief executive of Surfers Against Sewage, said: “Water companies’ brazen disregard for the law is creating a public health emergency right under our noses.”

The Times can disclose that the Environment Agency is ordering water companies to draw up action plans by the end of the year for any sites in 2026 where numerous dry-day spills took place. Phil Duffy, chief executive of the Environment Agency, said: “If we don’t see the progress needed from the industry, we will take further robust action including prosecutions.” He called the agency a “changed regulator”.

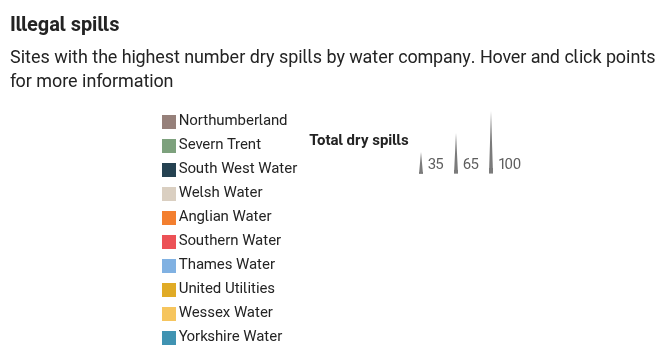

The worst site in England was a sewage treatment works run by South West Water at Salcombe Regis. Just south of the village in south Devon, it discharges into a stream that runs to the sea, where it emerges at Salcombe Mouth beach. It discharged raw sewage into the stream 117 times on dry days last year. The spills took place despite the company claiming last month it had “drastically” reduced spills at the works, which it had blamed on pipes connected to the wrong drains.

South West Water wastewater treatment works at Salcombe Regis, Devon – Mark Passmore for The Times

Susan Davy, who had been chief executive of South West Water until she retired in December, is understood to live nearby. The company is investigating the sewage treatment works, Environment Agency emails seen by The Times show.

Richard Foord, the Liberal Democrat MP for Honiton & Sidmouth, said the pebble beach was a picturesque spot. He said: “South West Water is a disgrace. I wrote to the chief executive about spills at Salcombe Regis last October, and received the cliched reply about ‘we take the issues raised seriously’. Rubbish!”

An outlet pipe near the South West Water Salcombe Regis treatment works – Mark Passmore for The Times

The second-worst site was a sewage works at Croyde in north Devon owned by the same company. It discharged 113 times right next to Croyde beach, a popular surfing spot and holiday destination. The coastline is designated a national landscape, a protected area formerly known as an area of outstanding natural beauty. South West Water also had the joint-fourth-poorest site, Abbotsham in north Devon, which had 86 spills into Kenwith stream.

Southern Water’s Lavant sewage treatment works was third, releasing raw sewage 96 times into the River Lavant, one of England’s globally rare chalk streams. Passing through Chichester, the waterway runs to Chichester Harbour. The harbour has several environmental designations recognising its importance for wildlife.

Photos and videos of the Lavant downstream of the Southern outfall, shared by the resident Rob Bailey, show clear signs of “sewage fungus” in the past three years. The fungus is formally known as a biofilm that is harmful to freshwater life.

Nick Backhouse, chairman of the Chichester Harbour Trust, said the state of the Lavant was now “dire” and Southern’s investment programme was a “small drop in the ocean”.

He said: “Chichester Harbour is one of Britain’s most important coastal landscapes, with rare saltmarsh, mudflats and internationally significant birdlife meeting the freshwater of the South Downs’ chalk streams.”

In joint fourth was Thames Water’s Stewkley sewage treatment works. Last year it caused 86 dry-day spills into a tiny stream, Hardwick Brook, in the Rushy Common Nature Reserve near Witney.

The Times recorded underwater video of the stream near the outfall. Upstream, the stones and plants of the riverbed can clearly be seen. Downstream, the water is extremely murky and aquatic flora is barely visible. Like Salcombe Regis and Lavant, the works have a long track record of high raw sewage spills.

Across England as a whole the total duration of dry-day spills last year was 157,803 hours.

The figures come during The Times’s Clean It Up campaign, which calls for better regulation, and after Channel 4’s Dirty Business drama.

Barbara Evans, a professor of public health engineering at the University of Leeds, said sewer blockages and a lack of capacity at sewage treatment works were two immediate reasons for the illegal spills. She added: “But the underlying reasons are climate change-related intensification of rainfall and long-term underinvestment in maintenance and upgrading in the system as a whole.”

Official figures at the end of this month are expected to show a significant fall in total legally permitted sewage spills last year because it was so dry. The decrease will be from a record high of 3.61 million hours in 2024.

Water UK, the industry body, said: “No spill is ever acceptable. Water companies are working to end them as fast as possible by tripling investment. Companies are investing £12 billion to halve spills from storm overflows by 2030 including relining and sealing sewers to prevent groundwater infiltration — one of the main causes of dry day spills.”

South West Water said: “Our customers want to see immediate action to reduce the use of storm overflows and this is our absolute priority — we have a record, multibillion-pound investment programme in place and while change on this scale takes time, we are already seeing results and we have committed to complete this work a decade ahead of government targets.”

Thames Water said: “Heavy rainfall can have an instant, adverse impact on our sewer infrastructure. However, over wider catchment areas rainwater and groundwater moves much more slowly, filtering through different geological formations at different rates. As a result, there can be a delay between a period of heavy rain and the increased flow reaching an overflow point, sometimes even occurring during dry weather.”

Steph Pullan, director of asset management at Yorkshire Water, said: “We know our historic performance hasn’t been as good as it should have been and we are focused on delivering improvements for our customers and the environment.”

Southern Water said groundwater levels in Lavant had been exceptionally high. Nick Mills, the director for environment and innovation, said: “We’re tankering and pumping around the clock, and when that’s not enough we use storm overflows under Environment Agency oversight to keep communities safe.”